r1_8b

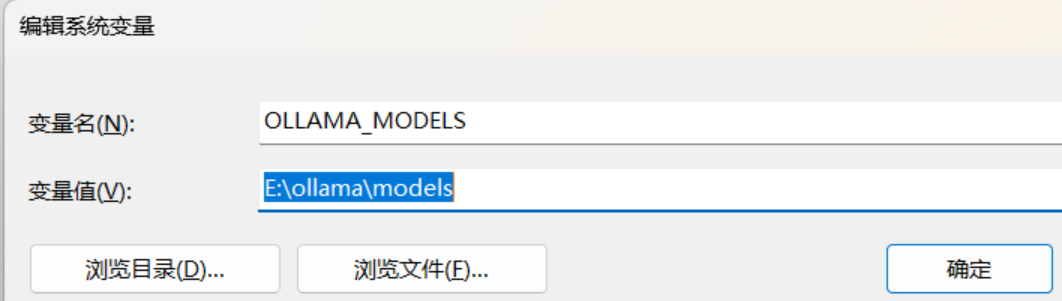

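System variables apply to every account on the machine; choose whichever scope suits your situation. Click Add, enter the variable name OLLAMA_MODELS, and set the variable value to the new location where you want to store your Ollama models. I put mine in E:\ollama\models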
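To confirm which directory the setting above implies, a small check along the following lines may help. This is a minimal sketch, not part of Ollama itself: the helper name `resolve_models_dir` is hypothetical, and the fallback to a `.ollama/models` folder under the home directory is an assumption about Ollama's default that may differ by platform or install method.

```python
import os


def resolve_models_dir(env=None):
    """Return the model directory implied by an environment mapping.

    Hypothetical helper for illustration only.
    """
    env = os.environ if env is None else env
    custom = env.get("OLLAMA_MODELS", "").strip()
    if custom:
        # The variable is set, so its value is the expected model location.
        return custom
    # Assumption: with OLLAMA_MODELS unset, models live in ~/.ollama/models.
    return os.path.join(os.path.expanduser("~"), ".ollama", "models")
```

Calling `resolve_models_dir()` from a newly opened terminal shows whether the variable is visible to new processes. Environment variables are read when a process starts, so Ollama likely needs to be restarted before it picks up the new location.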

- Pull the model

ollama pull deepseek-r1:8b

- Run the model

ollama run deepseek-r1:8b
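Once the model has been pulled, it can also be queried from a script instead of the interactive prompt. The sketch below uses only the Python standard library and Ollama's local REST endpoint `/api/generate`. It assumes the server is listening on the default address `http://localhost:11434`; the helper names `build_generate_request` and `ask` are illustrative, not part of any Ollama package.

```python
import json
import urllib.request

# Assumption: Ollama is running locally on its default port.
OLLAMA_URL = "http://localhost:11434"


def build_generate_request(prompt, model="deepseek-r1:8b"):
    """Build a POST request for the /api/generate endpoint."""
    # "stream": False asks for one JSON object instead of a chunked stream.
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        f"{OLLAMA_URL}/api/generate",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt, model="deepseek-r1:8b"):
    """Send the prompt and return the generated text (needs a running server)."""
    with urllib.request.urlopen(build_generate_request(prompt, model)) as resp:
        return json.loads(resp.read())["response"]
```

With the server running, `print(ask("Can I run DeepSeek-R1:8B via Ollama?"))` should print a reply similar in kind to the response reproduced in the rest of this post.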

🔍 Can I run DeepSeek-R1:8B via Ollama?

Currently, Ollama does not officially support the DeepSeek-R1 8B model unless it has been added to their repository or community-supported list. You should check:

- The Ollama models page for updates.

- Community forums (e.g., GitHub issues) if users have shared custom Docker images/definitions.

🧪 How to run transformer models like DeepSeek-R1 via Ollama

If the model is supported, you can use this command:

ollama run deepseek-r1:8b

This will:

- Download (if not installed) and load the 8B parameter version of DeepSeek-R1.

- Start a local instance for interactive chat or API access.

🤔 What is DeepSeek-R1:8B?

DeepSeek-R1 is an advanced transformer language model developed by DeepSeek (深度求索). The “8B” refers to the model’s 8 billion parameters. It’s designed for:

- Natural language understanding and generation.

- Code-related tasks (syntax, debugging).

- Knowledge answering.

- Creative writing.

📄 Example usage with Ollama CLI

# Start interactive chat (if supported)

⚠️ If the model isn’t supported yet:

You can try running it via Docker (if you have technical access):

docker run -d --name deepseek-r1 \

But note: This may not be officially maintained.

🌟 What else can Ollama run?

Ollama supports models like:

- Llama, Mistral, Mixtral

- GPT models (via LMSYS)

- Gemma, Phi, CodeLlama

- And many others!

Check the current list on their website.

Let me know if you’d like help with a specific task using DeepSeek-R1 or another model! 😊